Merge pull request #831 from shocknet/script

Install script, test, and dep enhancements

commit 403066efa9

24 changed files with 2663 additions and 2263 deletions

31

.github/workflows/test.yaml

vendored


@@ -9,8 +9,39 @@ jobs:
         run: unzip src/tests/regtestNetwork.zip
       - name: list files
         run: ls -la
+      - name: Remove expired certificates and keys
+        run: |
+          echo "Removing expired certificates and keys so LND will generate fresh ones..."
+          rm -f volumes/lnd/alice/tls.cert volumes/lnd/alice/tls.key
+          rm -f volumes/lnd/bob/tls.cert volumes/lnd/bob/tls.key
+          rm -f volumes/lnd/carol/tls.cert volumes/lnd/carol/tls.key
+          rm -f volumes/lnd/dave/tls.cert volumes/lnd/dave/tls.key
       - name: Build the stack
         run: docker compose --project-directory ./ -f src/tests/docker-compose.yml up -d
+      - name: Check container status
+        run: |
+          echo "Container status:"
+          docker ps -a
+          echo "Alice logs:"
+          docker logs polar-n2-alice --tail 20 || true
+          echo "Bob logs:"
+          docker logs polar-n2-bob --tail 20 || true
+      - name: Wait for LND containers to be ready
+        run: |
+          echo "Waiting for LND containers to start and generate certificates..."
+          sleep 30
+          # Wait for containers to be running first
+          echo "Waiting for containers to be running..."
+          timeout 120 bash -c 'until docker ps --filter "name=polar-n2-alice" --filter "status=running" --format "{{.Names}}" | grep -q polar-n2-alice; do sleep 5; done'
+          timeout 120 bash -c 'until docker ps --filter "name=polar-n2-bob" --filter "status=running" --format "{{.Names}}" | grep -q polar-n2-bob; do sleep 5; done'
+          timeout 120 bash -c 'until docker ps --filter "name=polar-n2-carol" --filter "status=running" --format "{{.Names}}" | grep -q polar-n2-carol; do sleep 5; done'
+          timeout 120 bash -c 'until docker ps --filter "name=polar-n2-dave" --filter "status=running" --format "{{.Names}}" | grep -q polar-n2-dave; do sleep 5; done'
+          echo "Containers are running, waiting for certificates..."
+          # Wait for certificates to be generated
+          timeout 120 bash -c 'until docker exec polar-n2-alice test -f /home/lnd/.lnd/tls.cert; do sleep 5; done'
+          timeout 120 bash -c 'until docker exec polar-n2-bob test -f /home/lnd/.lnd/tls.cert; do sleep 5; done'
+          timeout 120 bash -c 'until docker exec polar-n2-carol test -f /home/lnd/.lnd/tls.cert; do sleep 5; done'
+          timeout 120 bash -c 'until docker exec polar-n2-dave test -f /home/lnd/.lnd/tls.cert; do sleep 5; done'
       - name: Copy alice cert file
         run: docker cp polar-n2-alice:/home/lnd/.lnd/tls.cert alice-tls.cert
       - name: Copy bob cert file
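The "Wait for LND containers to be ready" step in the workflow hunk above repeats the same `timeout ... until ...; do sleep 5; done` loop eight times. As a rough sketch only (the helper name `wait_until` is hypothetical and is not part of this commit), the same polling pattern could be factored into one function:

```bash
#!/usr/bin/env bash

# wait_until TIMEOUT INTERVAL CMD [ARGS...]
# Re-run CMD every INTERVAL seconds until it succeeds or TIMEOUT seconds elapse.
# Returns 0 on success, 1 on timeout. (Hypothetical helper, not from the commit.)
wait_until() {
    local timeout="$1" interval="$2"
    shift 2
    local deadline=$(( $(date +%s) + timeout ))
    until "$@" >/dev/null 2>&1; do
        if [ "$(date +%s)" -ge "$deadline" ]; then
            return 1
        fi
        sleep "$interval"
    done
    return 0
}

# Possible usage, reusing the container names and cert path from the hunk:
# for node in alice bob carol dave; do
#     wait_until 120 5 docker exec "polar-n2-${node}" test -f /home/lnd/.lnd/tls.cert
# done
```

Unlike `timeout 120 bash -c '...'`, this checks the deadline between attempts, so a single slow attempt can overrun the limit; that trade-off may or may not be acceptable in CI.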

24

DOCKER.md

Normal file


@@ -0,0 +1,24 @@
+# Docker Installation
+
+> [!WARNING]
+> The Docker deployment method is currently unmaintained and may not work as expected. Help is wanted! If you are a Docker enjoyer, please consider contributing to this deployment method.
+
+1. Pull the Docker image:
+
+```ssh
+docker pull ghcr.io/shocknet/lightning-pub:latest
+```
+
+2. Run the Docker container:
+
+```ssh
+docker run -d \
+  --name lightning-pub \
+  --network host \
+  -p 1776:1776 \
+  -p 1777:1777 \
+  -v /path/to/local/data:/app/data \
+  -v $HOME/.lnd:/root/.lnd \
+  ghcr.io/shocknet/lightning-pub:latest
+```
+Network host is used so the service can reach a local LND via localhost. LND is assumed to be under the users home folder, update this location as needed.

61

README.md


|

|

@ -70,47 +70,52 @@ Dashboard Wireframe:

|

|||

|

||||

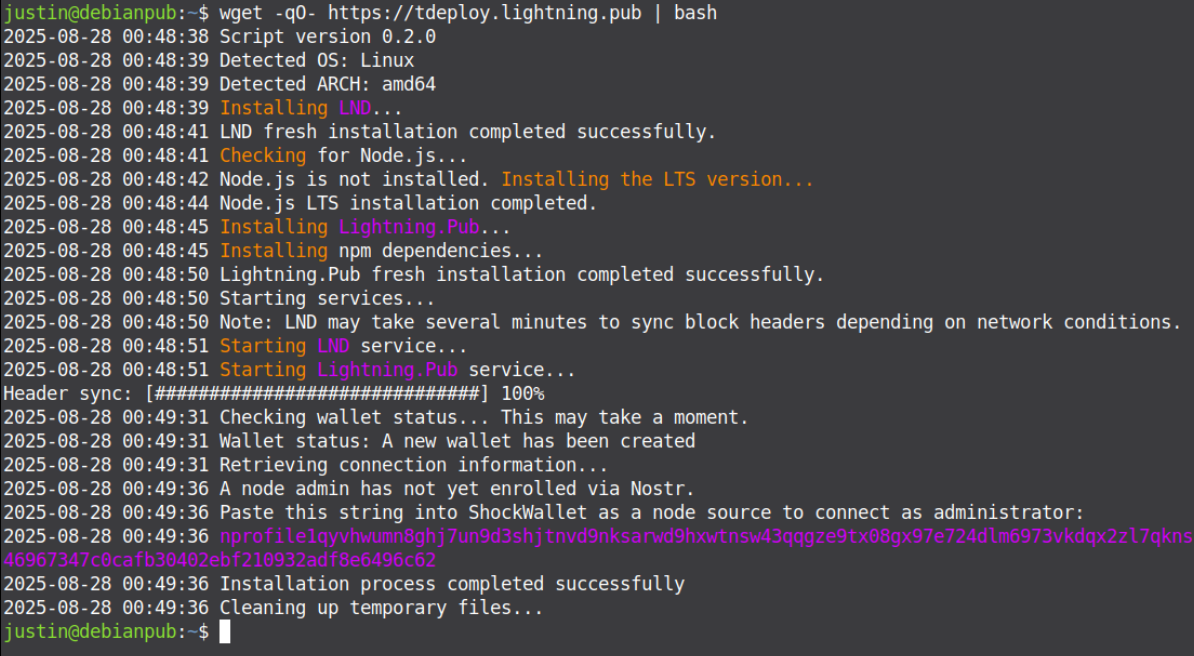

Paste one-line and have a Pub node in under 2 minutes. It uses neutrino so you can run it on a $5 VPS or old laptop.

|

||||

|

||||

This method installs all dependencies and creates systemd entries. It has been tested only in Ubuntu/Debian x64 environments, but is general enough that it should work on any linux system with systemd.

|

||||

This method installs all dependencies and creates user-level systemd services.

|

||||

|

||||

Mac support is rough'd in, but completely untested. Help wanted.

|

||||

**Platform Support:**

|

||||

- ✅ **Debian/Ubuntu**: Fully tested and supported

|

||||

- ⚠️ **Arch/Fedora**: Should work but untested - please report issues

|

||||

- 🚧 **macOS**: Basic support stubbed in but completely untested - help wanted

|

||||

|

||||

To start, run the following command:

|

||||

|

||||

```ssh

|

||||

sudo wget -qO- https://deploy.lightning.pub | sudo bash

|

||||

```bash

|

||||

wget -qO- https://deploy.lightning.pub | bash

|

||||

```

|

||||

|

||||

It should look like this in a minute or so

|

||||

|

||||

|

||||

|

||||

**Note:** The installation is now confined to user-space, meaning:

|

||||

- No sudo required for installation

|

||||

- All data stored in `$HOME/lightning_pub/`

|

||||

- Logs available at `$HOME/lightning_pub/install.log`

|

||||

|

||||

**⚠️ Migration from Previous Versions:**

|

||||

Previous system-wide installations (as of 8.27.2025) need some manual intervention:

|

||||

1. Stop existing services: `sudo systemctl stop lnd lightning_pub`

|

||||

2. Disable services: `sudo systemctl disable lnd lightning_pub`

|

||||

3. Remove old systemd units: `sudo rm /etc/systemd/system/lnd.service /etc/systemd/system/lightning_pub.service`

|

||||

4. Reload systemd: `sudo systemctl daemon-reload`

|

||||

5. Run the new installer: `wget -qO- https://deploy.lightning.pub | bash`

|

||||

|

||||

Please report any issues to the [issue tracker](https://github.com/shocknet/Lightning.Pub/issues).

|

||||

|

||||

#### Automatic updates

|

||||

|

||||

These are controversial to push by default and we're leaning against it. You can however add the line to cron to run it periodically and it will handle updating.

|

||||

These are controversial, so we don't include them. You can however add a line to your crontab to re-run the installer on your time preference and it will gracefully handle updating:

|

||||

|

||||

```bash

|

||||

# Add to user's crontab (crontab -e) - runs weekly on Sunday at 2 AM

|

||||

0 2 * * 0 wget -qO- https://deploy.lightning.pub | bash

|

||||

```

|

||||

|

||||

**Note:** The installer will only restart services if version checks deem necessary.

|

||||

|

||||

### Docker Installation

|

||||

|

||||

1. Pull the Docker image:

|

||||

|

||||

```ssh

|

||||

docker pull ghcr.io/shocknet/lightning-pub:latest

|

||||

```

|

||||

|

||||

2. Run the Docker container:

|

||||

|

||||

```ssh

|

||||

docker run -d \

|

||||

--name lightning-pub \

|

||||

--network host \

|

||||

-p 1776:1776 \

|

||||

-p 1777:1777 \

|

||||

-v /path/to/local/data:/app/data \

|

||||

-v $HOME/.lnd:/root/.lnd \

|

||||

ghcr.io/shocknet/lightning-pub:latest

|

||||

```

|

||||

Network host is used so the service can reach a local LND via localhost. LND is assumed to be under the users home folder, update this location as needed.

|

||||

See the [Docker Installation Guide](DOCKER.md).

|

||||

|

||||

### Manual CLI Installation

|

||||

|

||||

|

|

@ -140,9 +145,11 @@ npm start

|

|||

|

||||

## Usage Notes

|

||||

|

||||

Connect with [wallet2](https://github.com/shocknet/wallet2) using the wallet admin string that gets logged at startup. The nprofile of the node can also be used to send invitation links to guests.

|

||||

Connect with ShockWallet ([wallet2](https://github.com/shocknet/wallet2)) using the wallet admin string that gets logged at startup. Simply copy/paste the string into the node connection screen.

|

||||

|

||||

Note that connecting with wallet will create an account on the node, it will not show or have access to the full LND balance.

|

||||

The nprofile of the node can also be used to send invitation links to guests via the web version of ShockWallet.

|

||||

|

||||

**Note that connecting with wallet will create an account on the node, it will not show or have access to the full LND balance. Allocating existing funds to the admin user will be added to the operator dashboard in a future release.**

|

||||

|

||||

Additional docs are WIP at [docs.shock.network](https://docs.shock.network)

|

||||

|

||||

|

|

@ -156,4 +163,4 @@ Additional docs are WIP at [docs.shock.network](https://docs.shock.network)

|

|||

## Warning

|

||||

|

||||

> [!WARNING]

|

||||

> While this software has been used in a high-profile production environment for over a year, it should still be considered bleeding edge. Special care has been taken to mitigate the risk of drainage attacks, which is a common risk to all Lightning API's. An integrated Watchdog service will terminate spends if it detects a discrepency between LND and the database, for this reason **IT IS NOT RECOMMENDED TO USE PUB ALONGSIDE OTHER ACCOUNT SYSTEMS**. While we give the utmost care and attention to security, **the internet is an adversarial environment and SECURITY/RELIABILITY ARE NOT GUARANTEED- USE AT YOUR OWN RISK**.

|

||||

> While this software has been used in a high-profile production environment for several years, it should still be considered bleeding edge. Special care has been taken to mitigate the risk of drainage attacks, which is a common risk to all Lightning APIs. An integrated Watchdog service will terminate spends if it detects a discrepancy between LND and the database, for this reason **IT IS NOT RECOMMENDED TO USE PUB ALONGSIDE OTHER ACCOUNT SYSTEMS** such as AlbyHub, LNBits, or BTCPay - this watchdog may however be disabled. While we give the utmost care and attention to security, **the internet is an adversarial environment and SECURITY/RELIABILITY ARE NOT GUARANTEED- USE AT YOUR OWN RISK**.

@@ -37,7 +37,7 @@ import { OldSomethingLeftover1753106599604 } from './build/src/services/storage/
 import { UserReceivingInvoiceIdx1753109184611 } from './build/src/services/storage/migrations/1753109184611-user_receiving_invoice_idx.js'
 
 export default new DataSource({
-    type: "sqlite",
+    type: "better-sqlite3",
     database: "db.sqlite",
     // logging: true,
     migrations: [Initial1703170309875, LspOrder1718387847693, LiquidityProvider1719335699480, LndNodeInfo1720187506189, CreateInviteTokenTable1721751414878,

@@ -8,6 +8,7 @@
 #LND_ADDRESS=127.0.0.1:10009
 #LND_CERT_PATH=~/.lnd/tls.cert
 #LND_MACAROON_PATH=~/.lnd/data/chain/bitcoin/mainnet/admin.macaroon
+#LND_LOG_DIR=~/.lnd/logs/bitcoin/mainnet/lnd.log
 
 #BOOTSTRAP_PEER
 # A trusted peer that will hold a node-level account until channel automation becomes affordable

@@ -10,7 +10,7 @@ import { HtlcCount1724266887195 } from './build/src/services/storage/migrations/
 import { BalanceEvents1724860966825 } from './build/src/services/storage/migrations/1724860966825-balance_events.js'
 
 export default new DataSource({
-    type: "sqlite",
+    type: "better-sqlite3",
     database: "metrics.sqlite",
     entities: [BalanceEvent, ChannelBalanceEvent, ChannelRouting, RootOperation, ChannelEvent],
     migrations: [LndMetrics1703170330183, ChannelRouting1709316653538, HtlcCount1724266887195, BalanceEvents1724860966825]

BIN

one-liner.png


Binary file not shown.

|

Size before: 152 KiB. Size after: 458 KiB.

4029

package-lock.json

generated


File diff suppressed because it is too large


11

package.json


|

|

@ -31,13 +31,14 @@

|

|||

"@protobuf-ts/grpc-transport": "^2.9.4",

|

||||

"@protobuf-ts/plugin": "^2.5.0",

|

||||

"@protobuf-ts/runtime": "^2.5.0",

|

||||

"@shocknet/clink-sdk": "^1.1.7",

|

||||

"@shocknet/clink-sdk": "^1.3.1",

|

||||

"@stablelib/xchacha20": "^1.0.1",

|

||||

"@types/express": "^4.17.21",

|

||||

"@types/node": "^17.0.31",

|

||||

"@types/secp256k1": "^4.0.3",

|

||||

"axios": "^1.9.0",

|

||||

"bech32": "^2.0.0",

|

||||

"better-sqlite3": "^12.2.0",

|

||||

"bitcoin-core": "^4.2.0",

|

||||

"chai": "^4.3.7",

|

||||

"chai-string": "^1.5.0",

|

||||

|

|

@ -57,15 +58,12 @@

|

|||

"rimraf": "^3.0.2",

|

||||

"rxjs": "^7.5.5",

|

||||

"secp256k1": "^5.0.1",

|

||||

"sqlite3": "^5.1.7",

|

||||

"ts-node": "^10.7.0",

|

||||

"ts-proto": "^1.131.2",

|

||||

"typeorm": "0.3.15",

|

||||

"typeorm": "^0.3.26",

|

||||

"typescript": "^5.5.4",

|

||||

"uri-template": "^2.0.0",

|

||||

"uuid": "^8.3.2",

|

||||

"websocket": "^1.0.35",

|

||||

"websocket-polyfill": "^0.0.3",

|

||||

"why-is-node-running": "^3.2.0",

|

||||

"wrtc": "^0.4.7",

|

||||

"ws": "^8.18.0",

|

||||

|

|

@ -86,5 +84,6 @@

|

|||

"nodemon": "^2.0.20",

|

||||

"ts-node": "10.7.0",

|

||||

"typescript": "5.5.4"

|

||||

}

|

||||

},

|

||||

"overrides": {}

|

||||

}

|

||||

|

|

@ -1,50 +1,84 @@

|

|||

#!/bin/bash

|

||||

|

||||

get_log_info() {

|

||||

if [ "$EUID" -eq 0 ]; then

|

||||

USER_HOME=$(getent passwd ${SUDO_USER} | cut -d: -f6)

|

||||

USER_NAME=$SUDO_USER

|

||||

else

|

||||

USER_HOME=$HOME

|

||||

USER_NAME=$(whoami)

|

||||

fi

|

||||

|

||||

LOG_DIR="$USER_HOME/lightning_pub/logs"

|

||||

DATA_DIR="$USER_HOME/lightning_pub/"

|

||||

LOG_DIR="$INSTALL_DIR/logs"

|

||||

DATA_DIR="$INSTALL_DIR/"

|

||||

START_TIME=$(date +%s)

|

||||

MAX_WAIT_TIME=120 # Maximum wait time in seconds

|

||||

MAX_WAIT_TIME=360 # Maximum wait time in seconds (6 minutes)

|

||||

WAIT_INTERVAL=5 # Time to wait between checks in seconds

|

||||

|

||||

log "Checking wallet status... This may take a moment."

|

||||

if [ -z "$TIMESTAMP_FILE" ] || [ ! -f "$TIMESTAMP_FILE" ]; then

|

||||

log "Error: TIMESTAMP_FILE not set or found. Cannot determine new logs."

|

||||

exit 1

|

||||

fi

|

||||

|

||||

# Wait for unlocker log file

|

||||

# Get the modification time of the timestamp file as a UNIX timestamp

|

||||

ref_timestamp=$(stat -c %Y "$TIMESTAMP_FILE")

|

||||

|

||||

# Wait for a new unlocker log file to be created

|

||||

while [ $(($(date +%s) - START_TIME)) -lt $MAX_WAIT_TIME ]; do

|

||||

latest_unlocker_log=$(ls -1t ${LOG_DIR}/components/unlocker_*.log 2>/dev/null | head -n 1)

|

||||

[ -n "$latest_unlocker_log" ] && break

|

||||

latest_unlocker_log=""

|

||||

# Loop through log files and check their modification time

|

||||

for log_file in "${LOG_DIR}/components/"unlocker_*.log; do

|

||||

if [ -f "$log_file" ]; then

|

||||

file_timestamp=$(stat -c %Y "$log_file")

|

||||

if [ "$file_timestamp" -gt "$ref_timestamp" ]; then

|

||||

latest_unlocker_log="$log_file"

|

||||

break # Found the newest log file

|

||||

fi

|

||||

fi

|

||||

done

|

||||

|

||||

if [ -n "$latest_unlocker_log" ]; then

|

||||

break

|

||||

fi

|

||||

sleep $WAIT_INTERVAL

|

||||

done

|

||||

|

||||

if [ -z "$latest_unlocker_log" ]; then

|

||||

log "Error: No unlocker log file found. Please check the service status."

|

||||

log "Error: No new unlocker log file found after starting services. Please check the service status."

|

||||

exit 1

|

||||

fi

|

||||

|

||||

# Get the initial file size instead of line count

|

||||

initial_size=$(stat -c %s "$latest_unlocker_log")

|

||||

|

||||

# Wait for new wallet status in log file

|

||||

# TODO: This wallet status polling is temporary; move to querying via the management port eventually.

|

||||

# Now that we have the correct log file, wait for the wallet status message

|

||||

START_TIME=$(date +%s)

|

||||

while [ $(($(date +%s) - START_TIME)) -lt $MAX_WAIT_TIME ]; do

|

||||

current_size=$(stat -c %s "$latest_unlocker_log")

|

||||

if [ $current_size -gt $initial_size ]; then

|

||||

latest_entry=$(tail -c +$((initial_size + 1)) "$latest_unlocker_log" | grep -E "unlocker >> (the wallet is already unlocked|created wallet with pub|unlocked wallet with pub)" | tail -n 1)

|

||||

latest_entry=$(grep -E "unlocker >> (the wallet is already unlocked|created wallet with pub:|unlocked wallet with pub)" "$latest_unlocker_log" | tail -n 1)

|

||||

|

||||

# Show dynamic header sync progress if available from unlocker logs

|

||||

progress_line=$(grep -E "LND header sync [0-9]+% \(height=" "$latest_unlocker_log" | tail -n 1)

|

||||

if [ -n "$progress_line" ]; then

|

||||

percent=$(echo "$progress_line" | sed -E 's/.*LND header sync ([0-9]+)%.*/\1/')

|

||||

if [[ "$percent" =~ ^[0-9]+$ ]]; then

|

||||

bar_len=30

|

||||

filled=$((percent*bar_len/100))

|

||||

if [ $filled -gt $bar_len ]; then filled=$bar_len; fi

|

||||

empty=$((bar_len-filled))

|

||||

filled_bar=$(printf '%*s' "$filled" | tr ' ' '#')

|

||||

empty_bar=$(printf '%*s' "$empty" | tr ' ' ' ')

|

||||

echo -ne "Header sync: [${filled_bar}${empty_bar}] ${percent}%\r"

|

||||

fi

|

||||

fi

|

||||

if [ -n "$latest_entry" ]; then

|

||||

bar_len=30

|

||||

filled=$bar_len

|

||||

empty=0

|

||||

filled_bar=$(printf '%*s' "$filled" | tr ' ' '#')

|

||||

empty_bar=$(printf '%*s' "$empty" | tr ' ' ' ')

|

||||

echo -ne "Header sync: [${filled_bar}${empty_bar}] 100%\r"

|

||||

# End the progress line cleanly

|

||||

echo ""

|

||||

break

|

||||

fi

|

||||

fi

|

||||

initial_size=$current_size

|

||||

sleep $WAIT_INTERVAL

|

||||

done

|

||||

|

||||

log "Checking wallet status... This may take a moment."

|

||||

|

||||

if [ -z "$latest_entry" ]; then

|

||||

log "Can't retrieve wallet status, check the service logs."

|

||||

exit 1
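The header-sync progress display added in the hunk above computes the bar inline, twice. A minimal sketch of the same arithmetic as a standalone function follows; the name `render_bar` is hypothetical, and the sketch prints a full line instead of rewriting the current one with `\r`. It also drops the `tr ' ' ' '` call, which maps spaces to spaces and has no effect.

```bash
#!/usr/bin/env bash

# render_bar PERCENT [WIDTH]
# Print a fixed-width progress bar, e.g. "[#####     ] 50%".
# (Hypothetical helper illustrating the arithmetic used in get_log_info.)
render_bar() {
    local percent="$1" width="${2:-30}"
    # Accept only plain non-negative integers, as the hunk does with =~.
    [[ "$percent" =~ ^[0-9]+$ ]] || return 1
    local filled=$(( percent * width / 100 ))
    # Clamp so a value above 100 cannot overflow the bar.
    if [ "$filled" -gt "$width" ]; then
        filled=$width
    fi
    local empty=$(( width - filled ))
    local filled_bar empty_bar
    filled_bar=$(printf '%*s' "$filled" '' | tr ' ' '#')
    empty_bar=$(printf '%*s' "$empty" '')
    printf '[%s%s] %s%%\n' "$filled_bar" "$empty_bar" "$percent"
}
```

Passing an explicit empty string to `printf '%*s'` makes the padding intent visible; the hunk relies on bash treating the missing argument as empty, which has the same result.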

|

||||

|

|

@ -71,12 +105,10 @@ get_log_info() {

|

|||

admin_connect=$(cat "$DATA_DIR/admin.connect")

|

||||

# Check if the admin_connect string is complete (contains both nprofile and secret)

|

||||

if [[ $admin_connect == nprofile* ]] && [[ $admin_connect == *:* ]]; then

|

||||

log "An admin has not yet been enrolled."

|

||||

log "Paste this string into ShockWallet to administer the node:"

|

||||

log "A node admin has not yet enrolled via Nostr."

|

||||

log "Paste this string into ShockWallet as a node source to connect as administrator:"

|

||||

log "${SECONDARY_COLOR}$admin_connect${RESET_COLOR}"

|

||||

break

|

||||

else

|

||||

log "Waiting for complete admin connect information..."

|

||||

fi

|

||||

elif [ -f "$DATA_DIR/app.nprofile" ]; then

|

||||

app_nprofile=$(cat "$DATA_DIR/app.nprofile")

|

||||

|

|

|

|||

|

|

@ -1,24 +1,23 @@

|

|||

#!/bin/bash

|

||||

set -e

|

||||

|

||||

LOG_FILE="/var/log/pubdeploy.log"

|

||||

|

||||

touch $LOG_FILE

|

||||

chmod 644 $LOG_FILE

|

||||

# --- Use a secure temporary log file during installation ---

|

||||

TMP_LOG_FILE=$(mktemp)

|

||||

|

||||

log() {

|

||||

local message="$(date '+%Y-%m-%d %H:%M:%S') $1"

|

||||

echo -e "$message"

|

||||

echo -e "$(echo $message | sed 's/\\e\[[0-9;]*m//g')" >> $LOG_FILE

|

||||

# Write to the temporary log file.

|

||||

echo -e "$(echo "$message" | sed 's/\\e\[[0-9;]*m//g')" >> "$TMP_LOG_FILE"

|

||||

}

|

||||

|

||||

SCRIPT_VERSION="0.1.0"

|

||||

REPO_URL="https://github.com/shocknet/Lightning.Pub/tarball/master"

|

||||

BASE_URL="https://raw.githubusercontent.com/shocknet/Lightning.Pub/master/scripts/"

|

||||

SCRIPT_VERSION="0.2.0"

|

||||

REPO="shocknet/Lightning.Pub"

|

||||

BRANCH="master"

|

||||

|

||||

cleanup() {

|

||||

echo "Cleaning up temporary files..."

|

||||

rm -f /tmp/*.sh

|

||||

log "Cleaning up temporary files..."

|

||||

rm -rf "$TMP_DIR" 2>/dev/null || true

|

||||

}

|

||||

|

||||

trap cleanup EXIT

|

||||

|

|

@ -32,10 +31,10 @@ log_error() {

|

|||

|

||||

modules=(

|

||||

"utils"

|

||||

"check_homebrew"

|

||||

"install_rsync_mac"

|

||||

"create_launchd_plist"

|

||||

"start_services_mac"

|

||||

"check_homebrew" # NOTE: Used for macOS, which is untested/unsupported

|

||||

"install_rsync_mac" # NOTE: Used for macOS, which is untested/unsupported

|

||||

"create_launchd_plist" # NOTE: Used for macOS, which is untested/unsupported

|

||||

"start_services_mac" # NOTE: Used for macOS, which is untested/unsupported

|

||||

"install_lnd"

|

||||

"install_nodejs"

|

||||

"install_lightning_pub"

|

||||

|

|

@ -45,12 +44,52 @@ modules=(

|

|||

|

||||

log "Script version $SCRIPT_VERSION"

|

||||

|

||||

# Parse args for branch override

|

||||

while [[ $# -gt 0 ]]; do

|

||||

case $1 in

|

||||

--branch=*)

|

||||

BRANCH="${1#*=}"

|

||||

shift

|

||||

;;

|

||||

--branch)

|

||||

BRANCH="$2"

|

||||

shift 2

|

||||

;;

|

||||

*)

|

||||

shift

|

||||

;;

|

||||

esac

|

||||

done

|

||||

|

||||

BASE_URL="https://raw.githubusercontent.com/${REPO}/${BRANCH}"

|

||||

REPO_URL="https://github.com/${REPO}/tarball/${BRANCH}"

|

||||

SCRIPTS_URL="${BASE_URL}/scripts/"

|

||||

|

||||

TMP_DIR=$(mktemp -d)

|

||||

|

||||

for module in "${modules[@]}"; do

|

||||

wget -q "${BASE_URL}/${module}.sh" -O "/tmp/${module}.sh" || log_error "Failed to download ${module}.sh" 1

|

||||

source "/tmp/${module}.sh" || log_error "Failed to source ${module}.sh" 1

|

||||

wget -q "${SCRIPTS_URL}${module}.sh" -O "${TMP_DIR}/${module}.sh" || log_error "Failed to download ${module}.sh" 1

|

||||

source "${TMP_DIR}/${module}.sh" || log_error "Failed to source ${module}.sh" 1

|

||||

done

|

||||

|

||||

detect_os_arch

|

||||

|

||||

# Define installation paths based on user

|

||||

if [ "$(id -u)" -eq 0 ]; then

|

||||

IS_ROOT=true

|

||||

# For root, install under /opt for system-wide access

|

||||

export INSTALL_DIR="/opt/lightning_pub"

|

||||

export UNIT_DIR="/etc/systemd/system"

|

||||

export SYSTEMCTL_CMD="systemctl"

|

||||

log "Running as root: App will be installed in $INSTALL_DIR"

|

||||

else

|

||||

IS_ROOT=false

|

||||

export INSTALL_DIR="$HOME/lightning_pub"

|

||||

export UNIT_DIR="$HOME/.config/systemd/user"

|

||||

export SYSTEMCTL_CMD="systemctl --user"

|

||||

fi

|

||||

|

||||

check_deps

|

||||

log "Detected OS: $OS"

|

||||

log "Detected ARCH: $ARCH"
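The `--branch` override parsed in the hunk above accepts both `--branch=NAME` and `--branch NAME`. The sketch below isolates that loop in a hypothetical function, `parse_branch`, and adds one guard that is an assumption on our part and not in the commit: it rejects a trailing `--branch` with no value, since `shift 2` returns non-zero when fewer than two arguments remain.

```bash
#!/usr/bin/env bash

# parse_branch [ARGS...]
# Print the branch selected by --branch=NAME or --branch NAME (default: master).
# Unknown arguments are ignored, matching the loop in the install script.
parse_branch() {
    local branch="master"
    while [[ $# -gt 0 ]]; do
        case "$1" in
            --branch=*)
                branch="${1#*=}"
                shift
                ;;
            --branch)
                # Added guard (not in the commit): require a value after --branch.
                if [[ $# -lt 2 ]]; then
                    echo "--branch requires a value" >&2
                    return 1
                fi
                branch="$2"
                shift 2
                ;;
            *)
                shift
                ;;
        esac
    done
    printf '%s\n' "$branch"
}
```

When a script is piped into bash, arguments can be forwarded with `bash -s --`, so an invocation along the lines of `wget -qO- https://deploy.lightning.pub | bash -s -- --branch=NAME` would presumably reach this parser; the commit itself does not document that usage.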

|

||||

|

||||

|

|

@ -58,6 +97,8 @@ if [ "$OS" = "Mac" ]; then

|

|||

log "Handling macOS specific setup"

|

||||

handle_macos || log_error "macOS setup failed" 1

|

||||

else

|

||||

# Explicit kickoff log for LND so the flow is clear in the install log

|

||||

log "${PRIMARY_COLOR}Installing${RESET_COLOR} ${SECONDARY_COLOR}LND${RESET_COLOR}..."

|

||||

lnd_output=$(install_lnd)

|

||||

install_result=$?

|

||||

|

||||

|

|

@ -76,11 +117,48 @@ else

|

|||

|

||||

install_nodejs || log_error "Failed to install Node.js" 1

|

||||

|

||||

install_lightning_pub "$REPO_URL" || log_error "Failed to install Lightning.Pub" 1

|

||||

# Run install_lightning_pub and capture its exit code directly.

|

||||

# Exit codes from install_lightning_pub: 0=fresh, 100=upgrade, 2=no-op

|

||||

install_lightning_pub "$REPO_URL" || pub_install_status=$?

|

||||

|

||||

case ${pub_install_status:-0} in

|

||||

0)

|

||||

log "Lightning.Pub fresh installation completed successfully."

|

||||

pub_upgrade_status=0 # Indicates a fresh install, services should start

|

||||

;;

|

||||

100)

|

||||

log "Lightning.Pub upgrade completed successfully."

|

||||

pub_upgrade_status=100 # Indicates an upgrade, services should restart

|

||||

;;

|

||||

2)

|

||||

log "Lightning.Pub is already up-to-date. No action needed."

|

||||

pub_upgrade_status=2 # Special status to skip service restart

|

||||

;;

|

||||

*)

|

||||

log_error "Lightning.Pub installation failed with exit code $pub_install_status" "$pub_install_status"

|

||||

;;

|

||||

esac

|

||||

|

||||

# Only start services if it was a fresh install or an upgrade.

|

||||

if [ "$pub_upgrade_status" -eq 0 ] || [ "$pub_upgrade_status" -eq 100 ]; then

|

||||

log "Starting services..."

|

||||

if [ "$lnd_status" = "0" ] || [ "$lnd_status" = "1" ]; then

|

||||

log "Note: LND may take several minutes to sync block headers depending on network conditions."

|

||||

fi

|

||||

TIMESTAMP_FILE=$(mktemp)

|

||||

export TIMESTAMP_FILE

|

||||

start_services $lnd_status $pub_upgrade_status || log_error "Failed to start services" 1

|

||||

get_log_info || log_error "Failed to get log info" 1

|

||||

fi

|

||||

|

||||

log "Installation process completed successfully"

|

||||

|

||||

# --- Move temporary log to permanent location ---

|

||||

if [ -d "$HOME/lightning_pub" ]; then

|

||||

mv "$TMP_LOG_FILE" "$HOME/lightning_pub/install.log"

|

||||

chmod 600 "$HOME/lightning_pub/install.log"

|

||||

else

|

||||

# If the installation failed before the dir was created, clean up the temp log.

|

||||

rm -f "$TMP_LOG_FILE"

|

||||

fi

|

||||

fi
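The hunk above relies on `install_lightning_pub "$REPO_URL" || pub_install_status=$?` so that a non-zero return (100 for upgrade, 2 for no-op) is captured without `set -e` aborting the script. A small sketch of that pattern, using hypothetical stand-ins (`fake_install`, `classify_status`, `run_and_classify`) in place of the real functions:

```bash
#!/usr/bin/env bash
set -e

# Hypothetical stand-in for install_lightning_pub: returns the code it is given.
fake_install() {
    return "$1"
}

# classify_status CODE
# Map the exit codes documented in the diff to the action the installer takes.
classify_status() {
    case "$1" in
        0)   echo "start" ;;    # fresh install: start services
        100) echo "restart" ;;  # upgrade: restart services
        2)   echo "skip" ;;     # already up to date: leave services alone
        *)   echo "error" ;;    # anything else is a failure
    esac
}

run_and_classify() {
    local status=0
    # The "|| status=$?" form keeps set -e from exiting on a non-zero return.
    fake_install "$1" || status=$?
    classify_status "$status"
}
```

Initialising `status` to 0 before the call plays the same role as the `${pub_install_status:-0}` default in the hunk: on success the right-hand side of `||` never runs, so the variable would otherwise be unset.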

|

||||

|

|

@ -2,6 +2,11 @@

|

|||

|

||||

install_lightning_pub() {

|

||||

local REPO_URL="$1"

|

||||

# Defined exit codes for this function:

|

||||

# 0: Fresh install success (triggers service start)

|

||||

# 100: Upgrade success (triggers service restart)

|

||||

# 2: No-op (already up-to-date, skip services)

|

||||

# Other: Error

|

||||

local upgrade_status=0

|

||||

|

||||

if [ -z "$REPO_URL" ]; then

|

||||

|

|

@ -9,53 +14,93 @@ install_lightning_pub() {

|

|||

return 1

|

||||

fi

|

||||

|

||||

if [ "$EUID" -eq 0 ]; then

|

||||

USER_HOME=$(getent passwd ${SUDO_USER} | cut -d: -f6)

|

||||

USER_NAME=$SUDO_USER

|

||||

else

|

||||

local EXTRACT_DIR=$(mktemp -d)

|

||||

|

||||

USER_HOME=$HOME

|

||||

USER_NAME=$(whoami)

|

||||

fi

|

||||

|

||||

log "${PRIMARY_COLOR}Installing${RESET_COLOR} ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR}..."

|

||||

|

||||

sudo -u $USER_NAME wget -q $REPO_URL -O $USER_HOME/lightning_pub.tar.gz > /dev/null 2>&1 || {

|

||||

wget -q $REPO_URL -O $USER_HOME/lightning_pub.tar.gz > /dev/null 2>&1 || {

|

||||

log "${PRIMARY_COLOR}Failed to download Lightning.Pub.${RESET_COLOR}"

|

||||

return 1

|

||||

}

|

||||

|

||||

sudo -u $USER_NAME mkdir -p $USER_HOME/lightning_pub_temp

|

||||

sudo -u $USER_NAME tar -xzf $USER_HOME/lightning_pub.tar.gz -C $USER_HOME/lightning_pub_temp --strip-components=1 > /dev/null 2>&1 || {

|

||||

tar -xzf $USER_HOME/lightning_pub.tar.gz -C "$EXTRACT_DIR" --strip-components=1 > /dev/null 2>&1 || {

|

||||

log "${PRIMARY_COLOR}Failed to extract Lightning.Pub.${RESET_COLOR}"

|

||||

rm -rf "$EXTRACT_DIR"

|

||||

return 1

|

||||

}

|

||||

rm $USER_HOME/lightning_pub.tar.gz

|

||||

|

||||

if [ -d "$USER_HOME/lightning_pub" ]; then

|

||||

log "Upgrading existing Lightning.Pub installation..."

|

||||

upgrade_status=100 # Use 100 to indicate an upgrade

|

||||

else

|

||||

log "Performing fresh Lightning.Pub installation..."

|

||||

upgrade_status=0

|

||||

# Decide flow based on whether a valid previous installation exists.

|

||||

if [ -f "$INSTALL_DIR/.installed_commit" ] || [ -f "$INSTALL_DIR/db.sqlite" ]; then

|

||||

# --- UPGRADE PATH ---

|

||||

log "Existing installation found. Checking for updates..."

|

||||

|

||||

# Check if update is needed by comparing commit hashes

|

||||

API_RESPONSE=$(wget -qO- "https://api.github.com/repos/${REPO}/commits/${BRANCH}" 2>&1 | tee /tmp/api_response.log)

|

||||

if grep -q '"message"[[:space:]]*:[[:space:]]*"API rate limit exceeded"' <<< "$API_RESPONSE"; then

|

||||

log_error "GitHub API rate limit exceeded. Please wait a while before trying again." 1

|

||||

fi

|

||||

LATEST_COMMIT=$(echo "$API_RESPONSE" | awk -F'[/"]' '/"html_url": ".*\/commit\// {print $(NF-1); exit}')

|

||||

if [ -z "$LATEST_COMMIT" ]; then

|

||||

log_error "Could not retrieve latest version from GitHub. Upgrade check failed. Aborting." 1

|

||||

fi

|

||||

|

||||

# Merge if upgrade

|

||||

if [ $upgrade_status -eq 100 ]; then

|

||||

rsync -a --quiet --exclude='*.sqlite' --exclude='.env' --exclude='logs' --exclude='node_modules' --exclude='.jwt_secret' --exclude='.wallet_secret' --exclude='admin.npub' --exclude='app.nprofile' --exclude='admin.connect' --exclude='admin.enroll' $USER_HOME/lightning_pub_temp/ $USER_HOME/lightning_pub/

|

||||

else

|

||||

mv $USER_HOME/lightning_pub_temp $USER_HOME/lightning_pub

|

||||

CURRENT_COMMIT=$(cat "$INSTALL_DIR/.installed_commit" 2>/dev/null | head -c 40)

|

||||

if [ "$CURRENT_COMMIT" = "$LATEST_COMMIT" ]; then

|

||||

log "${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} is already at the latest commit. No update needed."

|

||||

rm -rf "$EXTRACT_DIR"

|

||||

return 2

|

||||

fi

|

||||

rm -rf $USER_HOME/lightning_pub_temp

|

||||

|

||||

log "${PRIMARY_COLOR}Upgrading${RESET_COLOR} ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} installation..."

|

||||

upgrade_status=100

|

||||

|

||||

# Stop the service if running to avoid rug-pull during backup and file replacement

|

||||

was_running=false

|

||||

if [ "$SYSTEMCTL_CMD" = "systemctl --user" ] && systemctl --user is-active --quiet lightning_pub 2>/dev/null; then

|

||||

log "Stopping Lightning.Pub service before upgrade..."

|

||||

systemctl --user stop lightning_pub

|

||||

was_running=true

|

||||

elif [ "$SYSTEMCTL_CMD" = "systemctl" ] && systemctl is-active --quiet lightning_pub 2>/dev/null; then

|

||||

log "Stopping Lightning.Pub service before upgrade..."

|

||||

systemctl stop lightning_pub

|

||||

was_running=true

|

||||

fi

|

||||

|

||||

log "Backing up user data before upgrade..."

|

||||

BACKUP_DIR=$(mktemp -d)

|

||||

mv "$INSTALL_DIR" "$BACKUP_DIR"

|

||||

BACKUP_DIR="$BACKUP_DIR/$(basename "$INSTALL_DIR")"

|

||||

|

||||

log "Installing latest version..."

|

||||

|

||||

mv "$EXTRACT_DIR" "$INSTALL_DIR"

|

||||

|

||||

elif [ -d "$INSTALL_DIR" ]; then

|

||||

# --- CONFLICT/UNSAFE PATH ---

|

||||

# This handles the case where the directory exists but is not a valid install (e.g., a git clone).

|

||||

log_error "Directory '$INSTALL_DIR' already exists but does not appear to be a valid installation. For your safety, please manually back up and remove this directory, then run the installer again." 1

|

||||

|

||||

else

|

||||

# --- FRESH INSTALL PATH ---

|

||||

# This path is only taken if the ~/lightning_pub directory does not exist.

|

||||

log "${PRIMARY_COLOR}Installing${RESET_COLOR} ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR}..."

|

||||

upgrade_status=0

|

||||

mkdir -p "$(dirname "$INSTALL_DIR")"

|

||||

mv "$EXTRACT_DIR" "$INSTALL_DIR"

|

||||

fi

|

||||

|

||||

|

||||

# Load nvm and npm

|

||||

export NVM_DIR="${NVM_DIR}"

|

||||

[ -s "${NVM_DIR}/nvm.sh" ] && \. "${NVM_DIR}/nvm.sh"

|

||||

|

||||

cd $USER_HOME/lightning_pub

|

||||

cd "$INSTALL_DIR"

|

||||

|

||||

log "${PRIMARY_COLOR}Installing${RESET_COLOR} npm dependencies..."

|

||||

|

||||

npm install > npm_install.log 2>&1

|

||||

npm install --no-optional --fallback-to-build=false > npm_install.log 2>&1

|

||||

npm_exit_code=$?

|

||||

|

||||

if [ $npm_exit_code -ne 0 ]; then
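The upgrade path in the hunk above decides whether to update by comparing the stored `.installed_commit` with the SHA parsed out of the GitHub API response. The sketch below reuses the same `awk` expression from the diff inside a hypothetical function, `extract_commit_sha`, and adds a hypothetical `needs_update` comparison; it assumes a pretty-printed JSON response containing an `html_url` line that ends in `/commit/<sha>`.

```bash
#!/usr/bin/env bash

# extract_commit_sha
# Read a commit JSON document on stdin and print the SHA from the first
# "html_url" line that points at .../commit/<sha>. Same awk program as the diff.
extract_commit_sha() {
    awk -F'[/"]' '/"html_url": ".*\/commit\// {print $(NF-1); exit}'
}

# needs_update INSTALLED LATEST
# Succeed only when LATEST is non-empty and differs from INSTALLED.
needs_update() {
    local installed="$1" latest="$2"
    [ -n "$latest" ] && [ "$installed" != "$latest" ]
}
```

Splitting on both `/` and `"` makes the SHA the second-to-last field whether or not the line ends with a trailing comma. A JSON-aware tool would be more robust, but the installer appears to avoid extra dependencies.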

@ -63,9 +108,61 @@ install_lightning_pub() {
tail -n 20 npm_install.log | while IFS= read -r line; do
log " $line"
done
log "${PRIMARY_COLOR}Full log available in $USER_HOME/lightning_pub/npm_install.log${RESET_COLOR}"
log "${PRIMARY_COLOR}Full log available in $INSTALL_DIR/npm_install.log${RESET_COLOR}"

log "Restoring previous installation due to upgrade failure..."
rm -rf "$INSTALL_DIR"
mv "$BACKUP_DIR" "$INSTALL_DIR"
log "Backup remnant at $BACKUP_DIR for manual review but may auto-clean on reboot."

if [ "$was_running" = true ]; then
log "Restarting Lightning.Pub service after restore."
$SYSTEMCTL_CMD start lightning_pub
fi

return 1
fi

return 0
if [ "$upgrade_status" -eq 100 ]; then
# Restore user data AFTER successful NPM install
log "Restoring user data..."
cp "$BACKUP_DIR"/*.sqlite "$INSTALL_DIR/" 2>/dev/null || true
cp "$BACKUP_DIR"/.env "$INSTALL_DIR/" 2>/dev/null || true
cp -r "$BACKUP_DIR"/logs "$INSTALL_DIR/" 2>/dev/null || true
cp -r "$BACKUP_DIR"/metric_cache "$INSTALL_DIR/" 2>/dev/null || true
cp "$BACKUP_DIR"/.jwt_secret "$INSTALL_DIR/" 2>/dev/null || true
cp "$BACKUP_DIR"/.wallet_secret "$INSTALL_DIR/" 2>/dev/null || true
cp "$BACKUP_DIR"/.installed_commit "$INSTALL_DIR/" 2>/dev/null || true
cp "$BACKUP_DIR"/admin.npub "$INSTALL_DIR/" 2>/dev/null || true
cp "$BACKUP_DIR"/app.nprofile "$INSTALL_DIR/" 2>/dev/null || true
cp "$BACKUP_DIR"/admin.connect "$INSTALL_DIR/" 2>/dev/null || true
cp "$BACKUP_DIR"/admin.enroll "$INSTALL_DIR/" 2>/dev/null || true

# Ensure correct ownership post-restore (fixes potential mismatches)
chown -R "$USER_NAME:$USER_NAME" "$INSTALL_DIR/" 2>/dev/null || true
# Secure DB files (as before)
chmod 600 "$INSTALL_DIR/db.sqlite" 2>/dev/null || true
chmod 600 "$INSTALL_DIR/metrics.sqlite" 2>/dev/null || true
chmod 600 "$INSTALL_DIR/.jwt_secret" 2>/dev/null || true
chmod 600 "$INSTALL_DIR/.wallet_secret" 2>/dev/null || true
chmod 600 "$INSTALL_DIR/admin.connect" 2>/dev/null || true
chmod 600 "$INSTALL_DIR/admin.enroll" 2>/dev/null || true
# Ensure log/metric dirs are writable (dirs need execute for traversal)
chmod 755 "$INSTALL_DIR/logs" 2>/dev/null || true
chmod 755 "$INSTALL_DIR/logs/"*/ 2>/dev/null || true # Subdirs like apps/
chmod 755 "$INSTALL_DIR/metric_cache" 2>/dev/null || true
fi

# Store the commit hash for future update checks
# Note: LATEST_COMMIT will be empty on a fresh install, which is fine.
# The file will be created, and the next run will be an upgrade.
if [ -n "$LATEST_COMMIT" ]; then
echo "$LATEST_COMMIT" > "$INSTALL_DIR/.installed_commit"
else
touch "$INSTALL_DIR/.installed_commit"
fi

rm -rf "$BACKUP_DIR" 2>/dev/null || true

return $upgrade_status
}
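
The upgrade path in install_lightning_pub moves the existing install aside, puts the new tree in place, and moves the old tree back if `npm install` fails. The same pattern can be sketched in isolation as below. This is only an illustration under stated assumptions: `swap_with_rollback` and its arguments are hypothetical names that do not appear in the PR, and the build step is passed in as an arbitrary command rather than being `npm install`.

```shell
# Hypothetical helper (not part of the PR):
#   swap_with_rollback INSTALL_DIR NEW_DIR BUILD_CMD [ARGS...]
# Moves INSTALL_DIR aside, moves NEW_DIR into its place, runs BUILD_CMD
# inside it, and restores the old tree if BUILD_CMD fails.
swap_with_rollback() {
    local install_dir=$1 new_dir=$2
    shift 2
    local backup_root backup_dir

    backup_root=$(mktemp -d) || return 1
    mv "$install_dir" "$backup_root" || return 1
    backup_dir="$backup_root/$(basename "$install_dir")"

    if ! mv "$new_dir" "$install_dir"; then
        mv "$backup_dir" "$install_dir"
        return 1
    fi

    # Run the build step in a subshell so the caller's cwd is untouched.
    if ! (cd "$install_dir" && "$@"); then
        # Build failed: discard the new tree and put the old one back.
        rm -rf "$install_dir"
        mv "$backup_dir" "$install_dir"
        rmdir "$backup_root" 2>/dev/null || true
        return 1
    fi

    # Build succeeded: carry user data over, then drop the backup.
    cp "$backup_dir"/*.sqlite "$install_dir/" 2>/dev/null || true
    rm -rf "$backup_root"
    return 0
}
```

In this sketch the backup is only deleted after the build step has succeeded and the data files have been copied, so a failure at any earlier point leaves the previous tree recoverable.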

@ -5,13 +5,8 @@ install_lnd() {

log "Starting LND installation/check process..."

if [ "$EUID" -eq 0 ]; then
USER_HOME=$(getent passwd ${SUDO_USER} | cut -d: -f6)
USER_NAME=$SUDO_USER
else
USER_HOME=$HOME
USER_NAME=$(whoami)
fi

log "Checking latest LND version..."
LND_VERSION=$(wget -qO- https://api.github.com/repos/lightningnetwork/lnd/releases/latest | grep -oP '"tag_name": "\K(.*)(?=")')

@ -54,41 +49,57 @@ install_lnd() {
log "${PRIMARY_COLOR}Downloading${RESET_COLOR} ${SECONDARY_COLOR}LND${RESET_COLOR}..."

# Start the download
sudo -u $USER_NAME wget -q $LND_URL -O $USER_HOME/lnd.tar.gz || {
wget -q $LND_URL -O $USER_HOME/lnd.tar.gz || {
log "${PRIMARY_COLOR}Failed to download LND.${RESET_COLOR}"
exit 1
}

# Check if LND is already running and stop it if necessary (Linux)
if [ "$OS" = "Linux" ] && [ "$SYSTEMCTL_AVAILABLE" = true ]; then
if systemctl is-active --quiet lnd; then
log "${PRIMARY_COLOR}Stopping${RESET_COLOR} ${SECONDARY_COLOR}LND${RESET_COLOR} service..."
sudo systemctl stop lnd
# Check if LND is already running and stop it if necessary (user-space)
if [ "$OS" = "Linux" ] && command -v systemctl >/dev/null 2>&1; then
if systemctl --user is-active --quiet lnd 2>/dev/null; then
log "${PRIMARY_COLOR}Stopping${RESET_COLOR} ${SECONDARY_COLOR}LND${RESET_COLOR} user service..."
systemctl --user stop lnd
fi
else
log "${PRIMARY_COLOR}systemctl not found. Please stop ${SECONDARY_COLOR}LND${RESET_COLOR} manually if it is running.${RESET_COLOR}"
log "${PRIMARY_COLOR}Please stop ${SECONDARY_COLOR}LND${RESET_COLOR} manually if it is running.${RESET_COLOR}"
fi

sudo -u $USER_NAME tar -xzf $USER_HOME/lnd.tar.gz -C $USER_HOME > /dev/null || {
log "Extracting LND..."
LND_TMP_DIR=$(mktemp -d -p "$USER_HOME")

tar -xzf "$USER_HOME/lnd.tar.gz" -C "$LND_TMP_DIR" --strip-components=1 > /dev/null || {
log "${PRIMARY_COLOR}Failed to extract LND.${RESET_COLOR}"
rm -rf "$LND_TMP_DIR"
rm -f "$USER_HOME/lnd.tar.gz"
exit 1
}

rm "$USER_HOME/lnd.tar.gz"

if [ -d "$USER_HOME/lnd" ]; then
log "Removing old LND directory..."
rm -rf "$USER_HOME/lnd"
fi

mv "$LND_TMP_DIR" "$USER_HOME/lnd" || {
log "${PRIMARY_COLOR}Failed to move new LND version into place.${RESET_COLOR}"
exit 1
}
rm $USER_HOME/lnd.tar.gz
sudo -u $USER_NAME mv $USER_HOME/lnd-* $USER_HOME/lnd

# Create .lnd directory if it doesn't exist
sudo -u $USER_NAME mkdir -p $USER_HOME/.lnd
mkdir -p $USER_HOME/.lnd

# Check if lnd.conf already exists and avoid overwriting it
if [ -f $USER_HOME/.lnd/lnd.conf ]; then
log "${PRIMARY_COLOR}lnd.conf already exists. Skipping creation of new lnd.conf file.${RESET_COLOR}"
else
sudo -u $USER_NAME bash -c "cat <<EOF > $USER_HOME/.lnd/lnd.conf
cat <<EOF > $USER_HOME/.lnd/lnd.conf
bitcoin.mainnet=true
bitcoin.node=neutrino
neutrino.addpeer=neutrino.shock.network
feeurl=https://nodes.lightning.computer/fees/v1/btc-fee-estimates.json
EOF"
fee.url=https://nodes.lightning.computer/fees/v1/btc-fee-estimates.json
EOF
chmod 600 $USER_HOME/.lnd/lnd.conf
fi

log "${SECONDARY_COLOR}LND${RESET_COLOR} installation and configuration completed."
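
The new install_lnd lines extract the release tarball into a temporary directory with `--strip-components=1` and only replace `$USER_HOME/lnd` once extraction has succeeded. A minimal sketch of that extract-then-swap step follows. It assumes GNU `tar` and `mktemp`; `install_tarball` and its parameters are hypothetical names used for illustration, not functions from the PR.

```shell
# Hypothetical helper (not part of the PR): install_tarball TARBALL DEST
# Extracts TARBALL into a temp dir next to DEST, dropping the archive's
# top-level directory, and replaces DEST only if extraction succeeds.
install_tarball() {
    local tarball=$1 dest=$2 tmp_dir

    # Create the temp dir beside DEST so the final mv stays on one filesystem.
    tmp_dir=$(mktemp -d -p "$(dirname "$dest")") || return 1

    if ! tar -xzf "$tarball" -C "$tmp_dir" --strip-components=1; then
        # Extraction failed: clean up and leave any existing DEST untouched.
        rm -rf "$tmp_dir"
        return 1
    fi

    rm -rf "$dest"
    mv "$tmp_dir" "$dest"
}
```

Because the old directory is removed only after a successful extraction, a corrupt or truncated download leaves the previous version in place.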

@ -1,15 +1,10 @@
#!/bin/bash

install_nodejs() {
if [ "$EUID" -eq 0 ] && [ -n "$SUDO_USER" ]; then
USER_HOME=$(getent passwd ${SUDO_USER} | cut -d: -f6)
USER_NAME=${SUDO_USER}
else
USER_HOME=$HOME
USER_NAME=$(whoami)
fi

NVM_DIR="$USER_HOME/.nvm"
export NVM_DIR="$USER_HOME/.nvm"
log "${PRIMARY_COLOR}Checking${RESET_COLOR} for Node.js..."
MINIMUM_VERSION="18.0.0"

@ -19,7 +14,7 @@ install_nodejs() {

if ! command -v nvm &> /dev/null; then
NVM_VERSION=$(wget -qO- https://api.github.com/repos/nvm-sh/nvm/releases/latest | grep -oP '"tag_name": "\K(.*)(?=")')
sudo -u $USER_NAME bash -c "wget -qO- https://raw.githubusercontent.com/nvm-sh/nvm/${NVM_VERSION}/install.sh | bash > /dev/null 2>&1"
wget -qO- https://raw.githubusercontent.com/nvm-sh/nvm/${NVM_VERSION}/install.sh | bash > /dev/null 2>&1
export NVM_DIR="${NVM_DIR}"
[ -s "${NVM_DIR}/nvm.sh" ] && \. "${NVM_DIR}/nvm.sh"
fi

@ -36,7 +31,8 @@ install_nodejs() {
log "Node.js is not installed. ${PRIMARY_COLOR}Installing the LTS version...${RESET_COLOR}"
fi

if ! sudo -u $USER_NAME bash -c "source ${NVM_DIR}/nvm.sh && nvm install --lts"; then
# Silence all nvm output to keep installer logs clean
if ! bash -c "source ${NVM_DIR}/nvm.sh && nvm install --lts" >/dev/null 2>&1; then
log "${PRIMARY_COLOR}Failed to install Node.js.${RESET_COLOR}"
return 1
fi

@ -4,117 +4,113 @@ start_services() {
LND_STATUS=$1
PUB_UPGRADE=$2

if [ "$EUID" -eq 0 ]; then
USER_HOME=$(getent passwd ${SUDO_USER} | cut -d: -f6)
USER_NAME=$SUDO_USER
else
USER_HOME=$HOME
USER_NAME=$(whoami)


# Ensure NVM_DIR is set
if [ -z "$NVM_DIR" ]; then
export NVM_DIR="$USER_HOME/.nvm"
fi

if [ "$OS" = "Linux" ]; then
if [ "$SYSTEMCTL_AVAILABLE" = true ]; then
sudo bash -c "cat > /etc/systemd/system/lnd.service <<EOF
[Unit]
Description=LND Service
After=network.target
mkdir -p "$UNIT_DIR"

[Service]
ExecStart=${USER_HOME}/lnd/lnd
User=${USER_NAME}
Restart=always

[Install]
WantedBy=multi-user.target
EOF"

sudo bash -c "cat > /etc/systemd/system/lightning_pub.service <<EOF
[Unit]
Description=Lightning.Pub Service
After=network.target

[Service]
ExecStart=/bin/bash -c 'source ${NVM_DIR}/nvm.sh && npm start'
WorkingDirectory=${USER_HOME}/lightning_pub
User=${USER_NAME}
Restart=always

[Install]
WantedBy=multi-user.target
EOF"

sudo systemctl daemon-reload
sudo systemctl enable lnd >/dev/null 2>&1
sudo systemctl enable lightning_pub >/dev/null 2>&1

# Always attempt to start or restart LND
if systemctl is-active --quiet lnd; then
if [ "$LND_STATUS" = "1" ]; then
log "${PRIMARY_COLOR}Restarting${RESET_COLOR} ${SECONDARY_COLOR}LND${RESET_COLOR} service..."
sudo systemctl restart lnd
else
log "${SECONDARY_COLOR}LND${RESET_COLOR} service is already running."
# Check and create lnd.service if needed (only if it doesn't exist)
LND_UNIT="$UNIT_DIR/lnd.service"
if [ ! -f "$LND_UNIT" ]; then
NEW_LND_CONTENT="[Unit]\nDescription=LND Service\nAfter=network.target\n\n[Service]\nExecStart=${USER_HOME}/lnd/lnd\nRestart=always\n\n[Install]\nWantedBy=default.target"
echo -e "$NEW_LND_CONTENT" > "$LND_UNIT"
$SYSTEMCTL_CMD daemon-reload
$SYSTEMCTL_CMD enable lnd >/dev/null 2>&1
fi

# Check and create lightning_pub.service if needed (only if it doesn't exist)
PUB_UNIT="$UNIT_DIR/lightning_pub.service"
if [ ! -f "$PUB_UNIT" ]; then
NEW_PUB_CONTENT="[Unit]\nDescription=Lightning.Pub Service\nAfter=network.target\n\n[Service]\nExecStart=/bin/bash -c 'source ${NVM_DIR}/nvm.sh && npm start'\nWorkingDirectory=${INSTALL_DIR}\nRestart=always\n\n[Install]\nWantedBy=default.target"
echo -e "$NEW_PUB_CONTENT" > "$PUB_UNIT"
$SYSTEMCTL_CMD daemon-reload
$SYSTEMCTL_CMD enable lightning_pub >/dev/null 2>&1
fi

# Start/restart LND if it was freshly installed or upgraded
if [ "$LND_STATUS" = "0" ] || [ "$LND_STATUS" = "1" ]; then
if $SYSTEMCTL_CMD is-active --quiet lnd; then
log "${PRIMARY_COLOR}Restarting${RESET_COLOR} ${SECONDARY_COLOR}LND${RESET_COLOR} service..."
$SYSTEMCTL_CMD restart lnd
else
log "${PRIMARY_COLOR}Starting${RESET_COLOR} ${SECONDARY_COLOR}LND${RESET_COLOR} service..."
sudo systemctl start lnd
$SYSTEMCTL_CMD start lnd
fi

# Check LND status after attempting to start/restart
if ! systemctl is-active --quiet lnd; then
if ! $SYSTEMCTL_CMD is-active --quiet lnd; then
log "Failed to start or restart ${SECONDARY_COLOR}LND${RESET_COLOR}. Please check the logs."
exit 1
fi

log "Giving ${SECONDARY_COLOR}LND${RESET_COLOR} a few seconds to start before starting ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR}..."
sleep 10

# Always attempt to start or restart Lightning.Pub
if systemctl is-active --quiet lightning_pub; then
if [ "$PUB_UPGRADE" = "100" ]; then
log "${PRIMARY_COLOR}Restarting${RESET_COLOR} ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} service..."
sudo systemctl restart lightning_pub
else
log "${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} service is already running."
fi
else
log "${PRIMARY_COLOR}Starting${RESET_COLOR} ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} service..."
sudo systemctl start lightning_pub
fi

# Check Lightning.Pub status after attempting to start/restart
if ! systemctl is-active --quiet lightning_pub; then
log "Failed to start or restart ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR}. Please check the logs."
# Edge case: LND not updated but may be installed and not yet running.
# If we're about to start Pub, ensure LND is running; otherwise Pub will fail.
if [ "$PUB_UPGRADE" = "0" ] || [ "$PUB_UPGRADE" = "100" ]; then
if ! $SYSTEMCTL_CMD is-active --quiet lnd; then
log "${PRIMARY_COLOR}Starting${RESET_COLOR} ${SECONDARY_COLOR}LND${RESET_COLOR} service..."
$SYSTEMCTL_CMD start lnd
if ! $SYSTEMCTL_CMD is-active --quiet lnd; then
log "Failed to start ${SECONDARY_COLOR}LND${RESET_COLOR}. Please check the logs."
exit 1
fi
else
log "${SECONDARY_COLOR}LND${RESET_COLOR} already running."
fi
fi
fi

if [ "$PUB_UPGRADE" = "0" ] || [ "$PUB_UPGRADE" = "100" ]; then
if [ "$PUB_UPGRADE" = "100" ]; then
log "${PRIMARY_COLOR}Restarting${RESET_COLOR} ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} service after upgrade..."
else
log "${PRIMARY_COLOR}Starting${RESET_COLOR} ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} service..."
fi
$SYSTEMCTL_CMD start lightning_pub
fi

# Check Lightning.Pub status after attempting to start
if [ "$PUB_UPGRADE" = "0" ] || [ "$PUB_UPGRADE" = "100" ]; then
SERVICE_ACTIVE=false
for i in {1..15}; do
if $SYSTEMCTL_CMD is-active --quiet lightning_pub; then
SERVICE_ACTIVE=true
break
fi
# Check for failed state to exit early
if $SYSTEMCTL_CMD is-failed --quiet lightning_pub; then
break
fi
sleep 1
done

if [ "$SERVICE_ACTIVE" = false ]; then
log "${PRIMARY_COLOR}ERROR:${RESET_COLOR} ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} service failed to start. Recent logs:"
if [ "$IS_ROOT" = true ]; then
journalctl -u lightning_pub.service -n 20 --no-pager | while IFS= read -r line; do log " $line"; done
else
journalctl --user-unit lightning_pub.service -n 20 --no-pager | while IFS= read -r line; do log " $line"; done
fi
log_error "Service startup failed." 1
fi
fi

else
create_start_script
log "systemctl not available. Created start.sh. Please use this script to start the services manually."
log "systemctl not available. Please start the services manually (e.g., run lnd and npm start in separate terminals)."
fi
elif [ "$OS" = "Mac" ]; then
log "macOS detected. Please configure launchd manually to start ${SECONDARY_COLOR}LND${RESET_COLOR} and ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} at startup."
create_start_script
# NOTE: macOS support is untested and unsupported. Use at your own risk. (restore)
log "macOS detected. Please configure launchd manually..."
elif [ "$OS" = "Cygwin" ] || [ "$OS" = "MinGw" ]; then
log "Windows detected. Please configure your startup scripts manually to start ${SECONDARY_COLOR}LND${RESET_COLOR} and ${SECONDARY_COLOR}Lightning.Pub${RESET_COLOR} at startup."
create_start_script
else
log "Unsupported OS detected. Please configure your startup scripts manually."
create_start_script
fi
}

create_start_script() {
cat <<EOF > start.sh
#!/bin/bash
${USER_HOME}/lnd/lnd &
LND_PID=\$!
sleep 10
npm start &
NODE_PID=\$!
wait \$LND_PID
wait \$NODE_PID
EOF
chmod +x start.sh
log "systemctl not available. Created start.sh. Please use this script to start the services manually."
}
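
The new start_services code polls `is-active` up to 15 times, one second apart, and stops early once `is-failed` reports a failure. That readiness loop can be sketched as a standalone helper. This is an illustrative sketch only: `wait_until_active` is a hypothetical name, and the two probes are passed in as command or function names so the loop can be exercised without systemd.

```shell
# Hypothetical helper (not part of the PR):
#   wait_until_active IS_ACTIVE_CMD IS_FAILED_CMD [ATTEMPTS]
# IS_ACTIVE_CMD and IS_FAILED_CMD are names of commands or functions that
# take no arguments and signal their answer through their exit status.
wait_until_active() {
    local is_active=$1 is_failed=$2 attempts=${3:-15} i=0

    while [ "$i" -lt "$attempts" ]; do
        # Success: the service reports active.
        "$is_active" && return 0
        # Early exit: the service has already failed, so waiting is pointless.
        "$is_failed" && return 1
        sleep 1
        i=$((i + 1))
    done

    # Timed out without becoming active.
    return 1
}
```

In the installer's setting, the two probes would presumably be thin wrappers around `$SYSTEMCTL_CMD is-active --quiet lightning_pub` and `$SYSTEMCTL_CMD is-failed --quiet lightning_pub`.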

@ -27,3 +27,12 @@ detect_os_arch() {
SYSTEMCTL_AVAILABLE=false
fi
}

check_deps() {
for cmd in wget grep stat tar sha256sum; do
if ! command -v $cmd &> /dev/null; then
log "Missing system dependency: $cmd. Install $cmd via your package manager and retry."
exit 1
fi
done
}
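
check_deps exits on the first missing command, so a user lacking several tools has to rerun the installer once per tool. One possible variant, sketched below, collects every missing command before reporting. `missing_deps` is a hypothetical name and this is not how the PR implements the check.

```shell
# Hypothetical variant (not part of the PR): missing_deps CMD...
# Prints a space-separated list of the commands that are not on PATH and
# returns non-zero if at least one is missing.
missing_deps() {
    local cmd missing=""

    for cmd in "$@"; do
        command -v "$cmd" >/dev/null 2>&1 || missing="$missing $cmd"
    done

    # Strip the leading space before printing the list.
    printf '%s\n' "${missing# }"
    [ -z "$missing" ]
}
```

A caller could then run `missing=$(missing_deps wget grep stat tar sha256sum)` and log the whole list in a single message when the call fails.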

@ -17,9 +17,10 @@ export const LoadLndSettingsFromEnv = (): LndSettings => {
const lndAddr = process.env.LND_ADDRESS || "127.0.0.1:10009"
const lndCertPath = process.env.LND_CERT_PATH || resolveHome("/.lnd/tls.cert")
const lndMacaroonPath = process.env.LND_MACAROON_PATH || resolveHome("/.lnd/data/chain/bitcoin/mainnet/admin.macaroon")
const lndLogDir = process.env.LND_LOG_DIR || resolveHome("/.lnd/logs/bitcoin/mainnet/lnd.log")
const feeRateBps = EnvCanBeInteger("OUTBOUND_MAX_FEE_BPS", 60)
const feeRateLimit = feeRateBps / 10000
const feeFixedLimit = EnvCanBeInteger("OUTBOUND_MAX_FEE_EXTRA_SATS", 100)
const mockLnd = EnvCanBeBoolean("MOCK_LND")
return { mainNode: { lndAddr, lndCertPath, lndMacaroonPath }, feeRateLimit, feeFixedLimit, feeRateBps, mockLnd }
return { mainNode: { lndAddr, lndCertPath, lndMacaroonPath }, lndLogDir, feeRateLimit, feeFixedLimit, feeRateBps, mockLnd }
}

@ -7,6 +7,7 @@ export type NodeSettings = {
}
export type LndSettings = {
mainNode: NodeSettings
lndLogDir: string
feeRateLimit: number
feeFixedLimit: number
feeRateBps: number

@ -15,6 +16,7 @@ export type LndSettings = {
otherNode?: NodeSettings
thirdNode?: NodeSettings
}

type TxOutput = {
hash: string
index: number

@ -28,6 +28,12 @@ export class AdminManager {
this.adminNpubPath = getDataPath(this.dataDir, 'admin.npub')
this.adminEnrollTokenPath = getDataPath(this.dataDir, 'admin.enroll')
this.adminConnectPath = getDataPath(this.dataDir, 'admin.connect')
this.log("AdminManager configured with paths:", {
dataDir: this.dataDir || process.cwd(),
adminNpubPath: this.adminNpubPath,
adminEnrollTokenPath: this.adminEnrollTokenPath,
adminConnectPath: this.adminConnectPath
})
this.appNprofilePath = getDataPath(this.dataDir, 'app.nprofile')
this.start()
}

@ -43,6 +49,7 @@ export class AdminManager {
if (enrollToken) {
const connectString = `${this.appNprofile}:${enrollToken}`
fs.writeFileSync(this.adminConnectPath, connectString)
fs.chmodSync(this.adminConnectPath, 0o600)
}
}
Stop = () => {

@ -52,8 +59,11 @@ export class AdminManager {
GenerateAdminEnrollToken = async () => {
const token = crypto.randomBytes(32).toString('hex')
fs.writeFileSync(this.adminEnrollTokenPath, token)
fs.chmodSync(this.adminEnrollTokenPath, 0o600)

const connectString = `${this.appNprofile}:${token}`
fs.writeFileSync(this.adminConnectPath, connectString)
fs.chmodSync(this.adminConnectPath, 0o600)
return token
}
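
The added `chmodSync(..., 0o600)` calls tighten the enroll and connect files right after they are written, matching the `chmod 600` lines in the install script. For comparison, a shell sketch of writing a 32-byte hex token into an owner-only file is shown below. `write_secret` is a hypothetical helper and not part of the PR. It sets a restrictive umask before the write so that a newly created file is never more permissive than 600, and then applies `chmod` to cover a file that already existed.

```shell
# Hypothetical helper (not part of the PR): write_secret PATH
# Writes 32 random bytes, hex-encoded (64 characters), to PATH with
# owner-only permissions.
write_secret() {
    local path=$1

    (
        # Restrict the creation mode inside a subshell so the caller's
        # umask is left unchanged.
        umask 077
        head -c 32 /dev/urandom | od -An -tx1 | tr -d ' \n' > "$path"
    ) || return 1

    # Covers the case where PATH already existed with looser permissions.
    chmod 600 "$path"
}
```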

@ -104,6 +114,7 @@ export class AdminManager {
}

PromoteUserToAdmin = async (appId: string, appUserId: string, token: string) => {
this.log(`Attempting to promote user ${appUserId} to admin.`)
const app = await this.storage.applicationStorage.GetApplication(appId)
const appUser = await this.storage.applicationStorage.GetApplicationUser(app, appUserId)
const npub = appUser.nostr_public_key

@ -114,15 +125,21 @@ export class AdminManager {
try {
actualToken = fs.readFileSync(this.adminEnrollTokenPath, 'utf8').trim()
} catch (err: any) {
this.log(ERROR, `Failed to read admin enroll token from ${this.adminEnrollTokenPath}:`, err.message)
throw new Error("invalid enroll token")
}
if (token !== actualToken) {
this.log(ERROR, `Provided admin token does not match stored token.`)
throw new Error("invalid enroll token")
}
this.log(`Token validated. Writing admin npub ${npub} to ${this.adminNpubPath}`)
fs.writeFileSync(this.adminNpubPath, npub)
this.log(`Unlinking enroll token at ${this.adminEnrollTokenPath}`)
fs.unlinkSync(this.adminEnrollTokenPath)
this.log(`Unlinking connect file at ${this.adminConnectPath}`)
fs.unlinkSync(this.adminConnectPath)
this.adminNpub = npub
this.log(`User ${npub} successfully promoted to admin in memory.`)
}

CreateInviteLink = async (adminNpub: string, sats?: number): Promise<Types.CreateOneTimeInviteLinkResponse> => {

@ -8,7 +8,7 @@ import { Application } from '../storage/entity/Application.js';
import { ApplicationUser } from '../storage/entity/ApplicationUser.js';
import { NostrEvent, NostrSend, SendData, SendInitiator } from '../nostr/handler.js';
import { UnsignedEvent } from 'nostr-tools';
import { NdebitData, NdebitFailure, NdebitSuccess, NdebitSuccessPayment, RecurringDebitTimeUnit } from "@shocknet/clink-sdk";
import { NdebitData, NdebitFailure, NdebitSuccess, RecurringDebitTimeUnit } from "@shocknet/clink-sdk";

export const expirationRuleName = 'expiration'
export const frequencyRuleName = 'frequency'

@ -100,7 +100,7 @@ const nip68errs = {
6: "Invalid Request",
}
type HandleNdebitRes = { status: 'fail', debitRes: NdebitFailure }
| { status: 'invoicePaid', op: Types.UserOperation, app: Application, appUser: ApplicationUser, debitRes: NdebitSuccessPayment }
| { status: 'invoicePaid', op: Types.UserOperation, app: Application, appUser: ApplicationUser, debitRes: NdebitSuccess }
| { status: 'authRequired', liveDebitReq: Types.LiveDebitRequest, app: Application, appUser: ApplicationUser }
| { status: 'authOk', debitRes: NdebitSuccess }
export class DebitManager {

@ -181,7 +181,7 @@ export class DebitManager {
const app = await this.storage.applicationStorage.GetApplication(ctx.app_id)
const appUser = await this.storage.applicationStorage.GetApplicationUser(app, ctx.app_user_id)
const { op, payment } = await this.sendDebitPayment(ctx.app_id, ctx.app_user_id, req.npub, req.response.invoice)
const debitRes: NdebitSuccessPayment = { res: 'ok', preimage: payment.preimage }
const debitRes: NdebitSuccess = { res: 'ok', preimage: payment.preimage }
this.notifyPaymentSuccess(appUser, debitRes, op, { appId: ctx.app_id, pub: req.npub, id: req.request_id })
return
default:

@ -211,7 +211,7 @@ export class DebitManager {
this.notifyPaymentSuccess(appUser, debitRes, op, event)
}

notifyPaymentSuccess = (appUser: ApplicationUser, debitRes: NdebitSuccessPayment, op: Types.UserOperation, event: { pub: string, id: string, appId: string }) => {
notifyPaymentSuccess = (appUser: ApplicationUser, debitRes: NdebitSuccess, op: Types.UserOperation, event: { pub: string, id: string, appId: string }) => {
const message: Types.LiveUserOperation & { requestId: string, status: 'OK' } = { operation: op, requestId: "GetLiveUserOperations", status: 'OK' }
if (appUser.nostr_public_key) { // TODO - fix before support for http streams
this.nostrSend({ type: 'app', appId: event.appId }, { type: 'content', content: JSON.stringify(message), pub: appUser.nostr_public_key })

@ -219,7 +219,7 @@ export class DebitManager {
this.sendDebitResponse(debitRes, event)
}

sendDebitResponse = (debitRes: NdebitFailure | NdebitSuccess | NdebitSuccessPayment, event: { pub: string, id: string, appId: string }) => {
sendDebitResponse = (debitRes: NdebitFailure | NdebitSuccess, event: { pub: string, id: string, appId: string }) => {
const e = newNdebitResponse(JSON.stringify(debitRes), event)
this.nostrSend({ type: 'app', appId: event.appId }, { type: 'event', event: e, encrypt: { toPub: event.pub } })
}

@ -165,7 +165,7 @@ export class OfferManager {
}

async HandleDefaultUserOffer(offerReq: NofferData, appId: string, remote: number): Promise<{ success: true, invoice: string } | { success: false, code: number, max: number }> {
const { amount, offer } = offerReq
const { amount_sats: amount, offer } = offerReq
if (!amount || isNaN(amount) || amount < 10 || amount > remote) {
return { success: false, code: 5, max: remote }
}

@ -178,7 +178,7 @@ export class OfferManager {
}

async HandleUserOffer(offerReq: NofferData, appId: string, remote: number): Promise<{ success: true, invoice: string } | { success: false, code: number, max: number }> {
const { amount, offer } = offerReq
const { amount_sats: amount, offer } = offerReq
const userOffer = await this.storage.offerStorage.GetOffer(offer)
if (!userOffer) {